Machine Learning is a complex subject built on many compounding, complex learnings accumulated over time by many people smarter than most.

There are a number of visual editor tools that try to flatten the learning curve by focusing on certain parts of the process or gamifying the workflow to ease users into the concepts gently. We know there’s a huge market wide open for taming complex, powerful ML workflows with easy-to-use visual tools that empower business and data analysts to do data science (after all, they know their own domain better than most!). A good example of this is DataRobot at the Enterprise level.

Today I came across this site called “Teachable Machine”, made by some smart people and built with TensorFlow.js. It lets you build and train simple models on sound, video and images, and then export your models in various formats to integrate with your own app or system! I have done something similar with Microsoft PowerApps and the AI Builder, but this takes it to a new level.

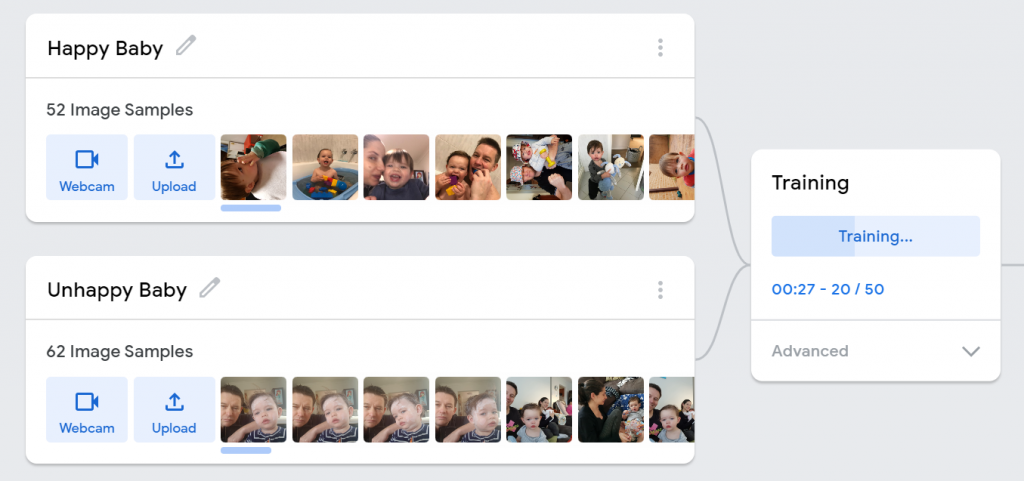

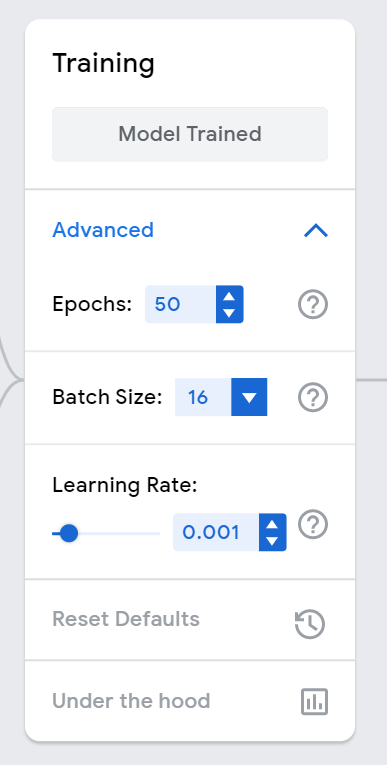

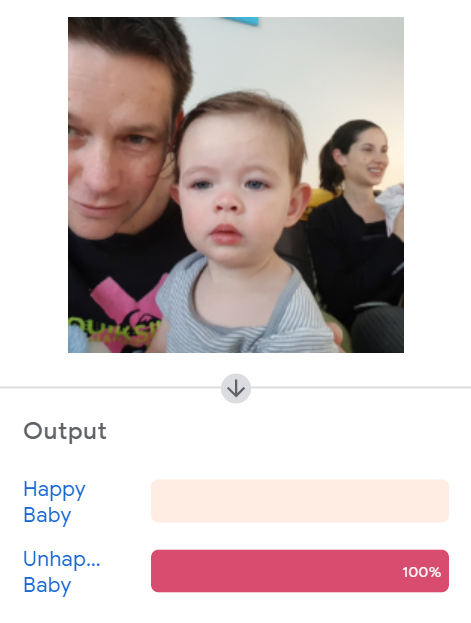

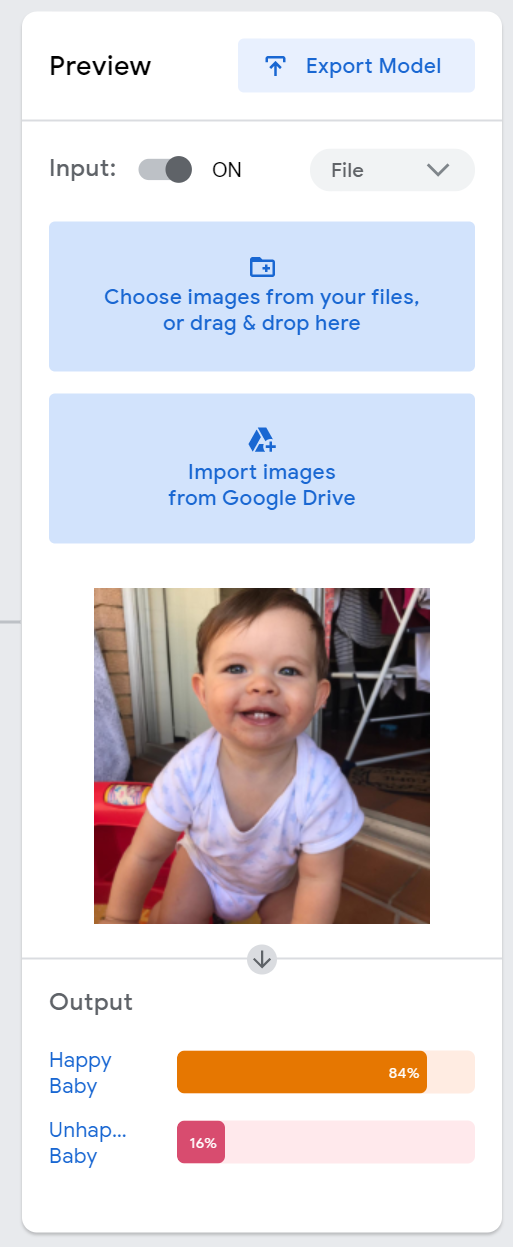

My poor son has been teething lately so I decided to do a very simple binary classification model – Is Baby Happy or Unhappy. Normally I use visual cues for this (crying for example) – however, for the purpose of this exercise I decided to use photos I had just downloaded from my phone. I wanted to see if I could point my camera at my son and have it tell me if he was unhappy or happy. This of course would probably make him even more unhappy as he would want my phone, so there’s probably a bit of bias in there… Of course, when my wife sees this there will be Unhappy Wife too.

As always, the quality of the model depends on the quality of the data AND the amount of data you train on. I wasn’t expecting miracles but it worked well with 50 images for the Happy and Unhappy classifications. Once you start playing around with other images you start to understand the magnitude of the challenges ahead of us with AI – for example, I trained the model on my son’s face, but really it should be able to work on any baby, male or female. What if there are two babies in the pic? Etc. etc. It’s all about the data as usual.
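To make the "it's all about the data" point a bit more concrete, here is a minimal sketch of how you might check a classifier like this on photos it has never seen, scoring each class separately so that a weak class can't hide behind a strong one. The labels and predictions below are made up purely for illustration (they are not results from my model), and `per_class_accuracy` is a hypothetical helper of my own, not part of Teachable Machine.

```python
from collections import Counter


def per_class_accuracy(true_labels, predicted_labels):
    """Return {class_name: fraction of that class predicted correctly}."""
    if len(true_labels) != len(predicted_labels):
        raise ValueError("label lists must have the same length")
    # How many examples of each class exist in the held-out set.
    totals = Counter(true_labels)
    # How many of those were predicted correctly, per class.
    correct = Counter(t for t, p in zip(true_labels, predicted_labels) if t == p)
    return {name: correct[name] / totals[name] for name in totals}


# Made-up held-out results for illustration only.
truth = ["Happy", "Happy", "Happy", "Unhappy", "Unhappy", "Unhappy"]
guess = ["Happy", "Happy", "Unhappy", "Unhappy", "Unhappy", "Unhappy"]

scores = per_class_accuracy(truth, guess)
print(scores)
```

If one class scores noticeably lower on new photos (a different baby, different lighting, two babies in shot), that is usually a hint that the training images for that class don't cover enough variety.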

I documented the steps for this rather pointless example; however, this video from the site itself really shows off how powerful this service is!

https://data-driven.com/wp-content/uploads/2019/11/prediction1.mp4

Video courtesy of Teachable Machine

I am a fan of #Microsoft Azure Machine Learning Studio (and the visual editor in the Azure Machine Learning service) – especially for quick ML experiments – you can drag and drop modules about and do data prep, transformations and ML without writing much code (you can drop into R, Python or SQL if need be). IMHO it’s great for experimentation and exploration, but it also requires some knowledge of ML concepts to know what order to do things in (e.g. ingestion, training, scoring, hyperparameter tuning etc.). At some point you graduate to a coded solution for more complex ML or to build it into your analytics flows (Databricks would be my tool of choice here).
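For anyone wondering what that ordering looks like once you do drop into code, here is a toy sketch of ingestion, training, scoring and hyperparameter tuning in plain Python. Everything in it is an assumption for illustration: the data is invented, the function names are my own, and I have swapped in a tiny k-nearest-neighbours classifier (where "training" just means keeping the training rows around) rather than anything Azure ML or Databricks actually runs.

```python
from collections import Counter


def ingest():
    # Hypothetical toy data: (feature, label) pairs standing in for real records.
    return [(0.1, "a"), (0.2, "a"), (0.3, "a"), (0.4, "a"), (0.35, "a"),
            (0.6, "b"), (0.7, "b"), (0.8, "b"), (0.9, "b"), (0.65, "b")]


def split(rows):
    # Hold out every third row for scoring; keep the rest for training.
    train = [r for i, r in enumerate(rows) if i % 3 != 0]
    held_out = [r for i, r in enumerate(rows) if i % 3 == 0]
    return train, held_out


def predict(train, x, k):
    # k-nearest-neighbours: majority label among the k closest training points.
    nearest = sorted(train, key=lambda r: abs(r[0] - x))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def score(train, held_out, k):
    # Fraction of held-out rows whose label is predicted correctly.
    hits = sum(predict(train, x, k) == y for x, y in held_out)
    return hits / len(held_out)


rows = ingest()                                  # 1. ingestion
train_rows, held_out_rows = split(rows)          # 2. train / held-out split
results = {k: score(train_rows, held_out_rows, k)  # 3. scoring ...
           for k in (1, 3, 5)}                   # 4. ... for each hyperparameter value
best_k = max(results, key=results.get)
print(results, best_k)
```

A real project would keep a third, untouched test set for the final check, since picking `k` on the same held-out rows you report on flatters the result.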

Teachable Machine takes it a step further (almost like SaaS versus PaaS) and narrows down the number of options to let you focus on quick results. Oh, and it’s great fun if you have a webcam! As in many products, it’s the User Experience that makes all the difference here (to most users, the UX IS the product). It’s super simple to either load your images or use your webcam (by far the best option). Click on Train and then test your model with a new pic or live on the webcam!

They’ve even been so kind as to share their code if you really want to get your hands dirty – http://github.com/googlecreativelab/teachablemachine-community/

To summarise – if you want a fun introduction to Machine Learning then check it out here: https://teachablemachine.withgoogle.com/