Microsoft Fabric Data Agents Are Now GA

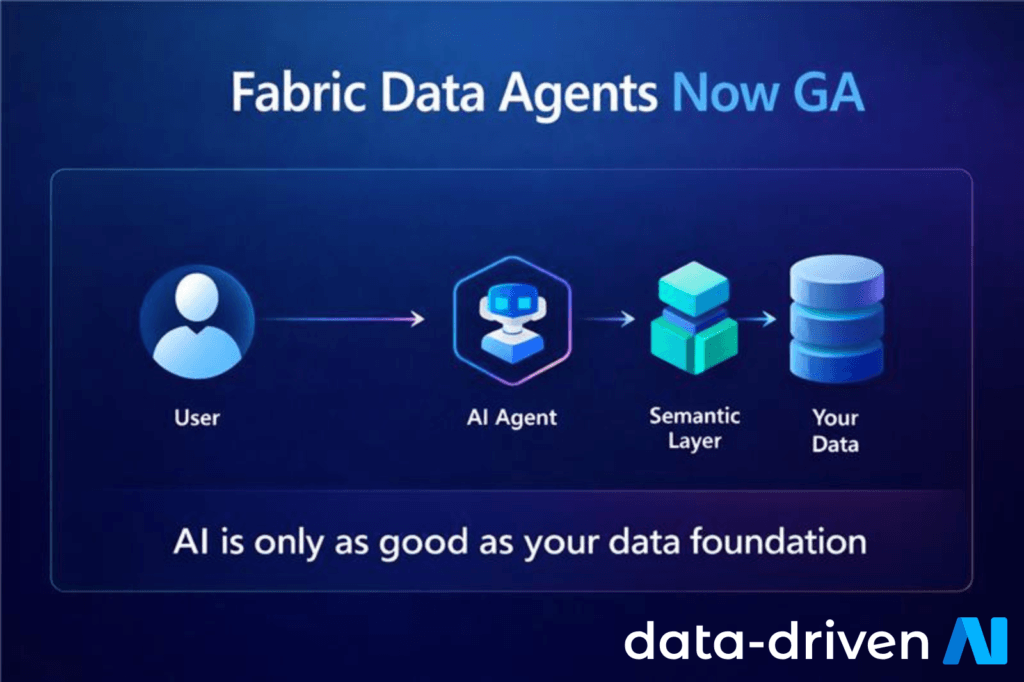

Microsoft Fabric Data Agents are now generally available (GA), and the impact extends well beyond a new chat interface.

GA signals that conversational access to governed enterprise data is now expected to meet production standards, including support, monitoring, and trust. Business users can ask questions in natural language while existing permissions and security boundaries still apply.

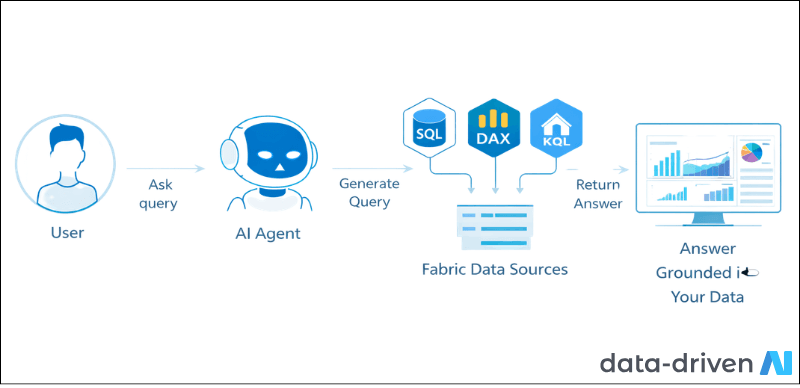

The agents translate natural language into executable queries, then run them against approved Fabric sources – lakehouses, warehouses, Power BI semantic models, and SQL endpoints. Each agent interprets intent, selects sources, generates SQL, DAX, or KQL, and returns an answer grounded in your data.
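To make the "select a source, then generate SQL, DAX, or KQL" step concrete, here is a minimal sketch of how a source type could map to a query language. The `SourceType` enum, the `KQL_DATABASE` entry, and the `query_language_for` helper are hypothetical names used for illustration only. They are not part of any Fabric API, and the real agent's routing logic is not described in this article.

```python
from enum import Enum


class SourceType(Enum):
    """Hypothetical labels for the kinds of sources an agent may query."""
    LAKEHOUSE = "lakehouse"
    WAREHOUSE = "warehouse"
    SEMANTIC_MODEL = "semantic_model"
    KQL_DATABASE = "kql_database"  # assumption: the source that KQL targets


# Assumed mapping from source type to the query language generated for it.
QUERY_LANGUAGE = {
    SourceType.LAKEHOUSE: "SQL",
    SourceType.WAREHOUSE: "SQL",
    SourceType.SEMANTIC_MODEL: "DAX",
    SourceType.KQL_DATABASE: "KQL",
}


def query_language_for(source: SourceType) -> str:
    """Return the query language assumed for a given source type."""
    return QUERY_LANGUAGE[source]
```

The point of the sketch is that the query language follows from the source, so the quality of each published source determines the quality of the generated query.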

Because Data Agents operate on your published models, semantic quality becomes the deciding factor. Clear naming, relationships, and measures make query generation more reliable. Inconsistent modelling increases ambiguity and reduces trust.
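As one illustration of what "clear naming" can mean in practice, the sketch below flags column names that are likely to be ambiguous for query generation. The rules (a small set of generic names, placeholder-style names such as `col1`, and near-duplicates that differ only in case, spaces, or underscores) are assumptions chosen for the example, not an official Fabric check.

```python
import re

# Assumed list of names too generic to be unambiguous on their own.
GENERIC_NAMES = {"value", "amount", "date", "id", "name", "flag", "type"}


def naming_issues(column_names):
    """Return {name: [issues]} for names likely to confuse query generation."""
    issues = {}
    seen = {}  # normalized name -> first original name using it
    for name in column_names:
        problems = []
        # Ignore case, spaces, and underscores when comparing names.
        normalized = re.sub(r"[\s_]+", "", name).lower()
        if normalized in GENERIC_NAMES:
            problems.append("generic name")
        if re.fullmatch(r"[A-Za-z]+\d+", name.strip()):  # e.g. col1, field2
            problems.append("placeholder-style name")
        if normalized in seen:
            problems.append(f"near-duplicate of {seen[normalized]!r}")
        else:
            seen[normalized] = name
        if problems:
            issues[name] = problems
    return issues
```

Running this over the column list of a model before exposing it to an agent gives a quick list of names to rename or document.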

With the feature at GA, organisations can start planning real usage at scale – training, governance, and operational ownership. Data Agents become part of how the business interacts with the platform.

The practical upside: repetitive requests like “Can you pull this number?” should drop as agents handle them. When agents answer well, engineers and analysts can focus on pipelines, quality controls, and model improvements.

For a deeper look at building strong semantic foundations, see our guide on Semantic Layer Best Practices.

Treat these agents as an interaction layer on top of strong engineering. Dimensional modelling, validated measures, and consistent definitions still matter. The agent makes those foundations more valuable – it does not replace them.

Read more on Dimensional Modelling in Microsoft Fabric.

The Engineering Reality Check

AI does not fix poor data models — it exposes them. When more people can query data in plain language, weak foundations surface quickly in support effort, cost, and confidence.

If a stakeholder challenges a number, your team needs to trace the prompt, the generated query, the source model, and the model version. Building observability into your adoption plan is the difference between a pilot and a production capability.
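The four items to trace (prompt, generated query, source model, and model version) can be captured as one structured record per question. The sketch below is a minimal, assumed design: `AgentTrace` and `to_log_line` are hypothetical names, and how you obtain these values from a Data Agent depends on your own logging setup, which this article does not specify.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentTrace:
    """One audit record per answered question (hypothetical schema)."""
    prompt: str            # the user's natural-language question
    generated_query: str   # the SQL, DAX, or KQL the agent produced
    query_language: str    # "SQL", "DAX", or "KQL"
    source_model: str      # the model or source the query ran against
    model_version: str     # your own version label for that model
    asked_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(trace: AgentTrace) -> str:
    """Serialize a trace as one JSON line for an append-only log."""
    return json.dumps(asdict(trace), sort_keys=True)
```

Storing one JSON line per question keeps the record easy to search when someone challenges a number: filter by prompt text or source model, then read back the exact query and model version that produced the answer.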

- Standardise naming and documentation
- Strengthen semantic relationships
- Put cost guardrails in place
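One simple form a cost guardrail can take is a per-user daily cap on agent questions. The sketch below is an illustrative assumption, not a Fabric feature: `DailyQueryBudget` is a hypothetical class, it keeps counts in memory only, and it uses the number of questions as a rough proxy for actual capacity consumption.

```python
from collections import defaultdict
from datetime import date


class DailyQueryBudget:
    """Caps how many agent questions each user may ask per day (in memory)."""

    def __init__(self, max_queries_per_user: int):
        self.max_queries_per_user = max_queries_per_user
        self._counts = defaultdict(int)  # (user, day) -> questions used

    def try_consume(self, user: str, day: date) -> bool:
        """Record one question; return False if the user's cap is reached."""
        key = (user, day)
        if self._counts[key] >= self.max_queries_per_user:
            return False
        self._counts[key] += 1
        return True

    def remaining(self, user: str, day: date) -> int:
        """Return how many questions the user has left for the given day."""
        return self.max_queries_per_user - self._counts.get((user, day), 0)
```

A production version would persist counts and ideally measure real consumption rather than question counts, but even a crude cap makes cost behaviour visible during a pilot.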

At Data-Driven AI, we help organisations succeed with Fabric Data Agents by strengthening the foundations they rely on – semantic model standards, governance patterns, and production-ready Fabric architecture.

If you are exploring this feature, start with a semantic layer review and a rollout plan that covers monitoring, ownership, and user guidance. Talk to Our Team Today.

Microsoft Fabric Data Agents can accelerate access to insights, but they also make platform quality visible. The safest path is to harden one domain, prove governance and cost controls, then expand access deliberately.